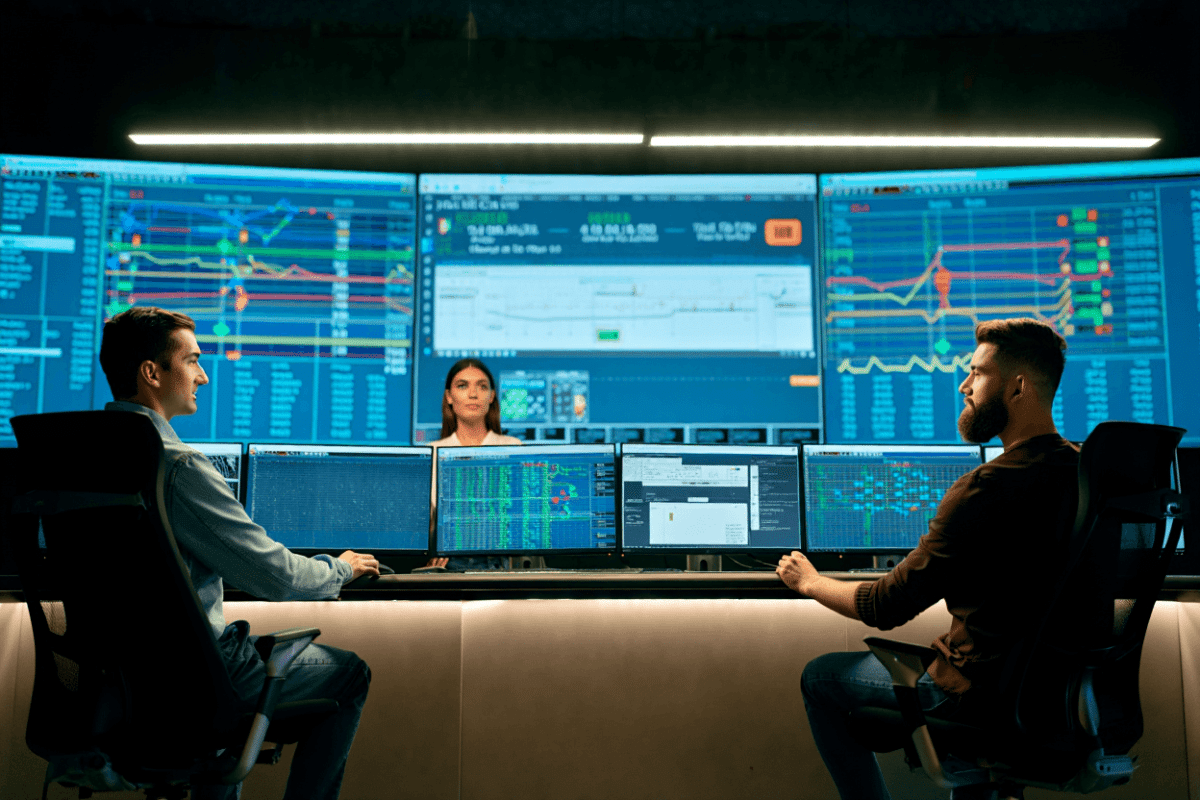

The European Parliament has told staff to keep built‑in AI assistants turned off on work devices, citing unresolved security and confidentiality concerns — a decision that matters now as lawmakers handle sensitive files and the European Union debates rules that could change how tech companies use Europeans’ data. The move underscores growing unease in Brussels about relying on U.S.-based AI services when legal access and data‑use practices remain unclear.

An internal IT message, reviewed by Politico, says parliamentary technicians cannot yet vouch for the safety of information sent to third‑party AI servers and that the degree to which those platforms capture or reuse uploaded content is still under review. For that reason, the note concludes, disabling built‑in AI features is the prudent choice for the moment.

What officials fear

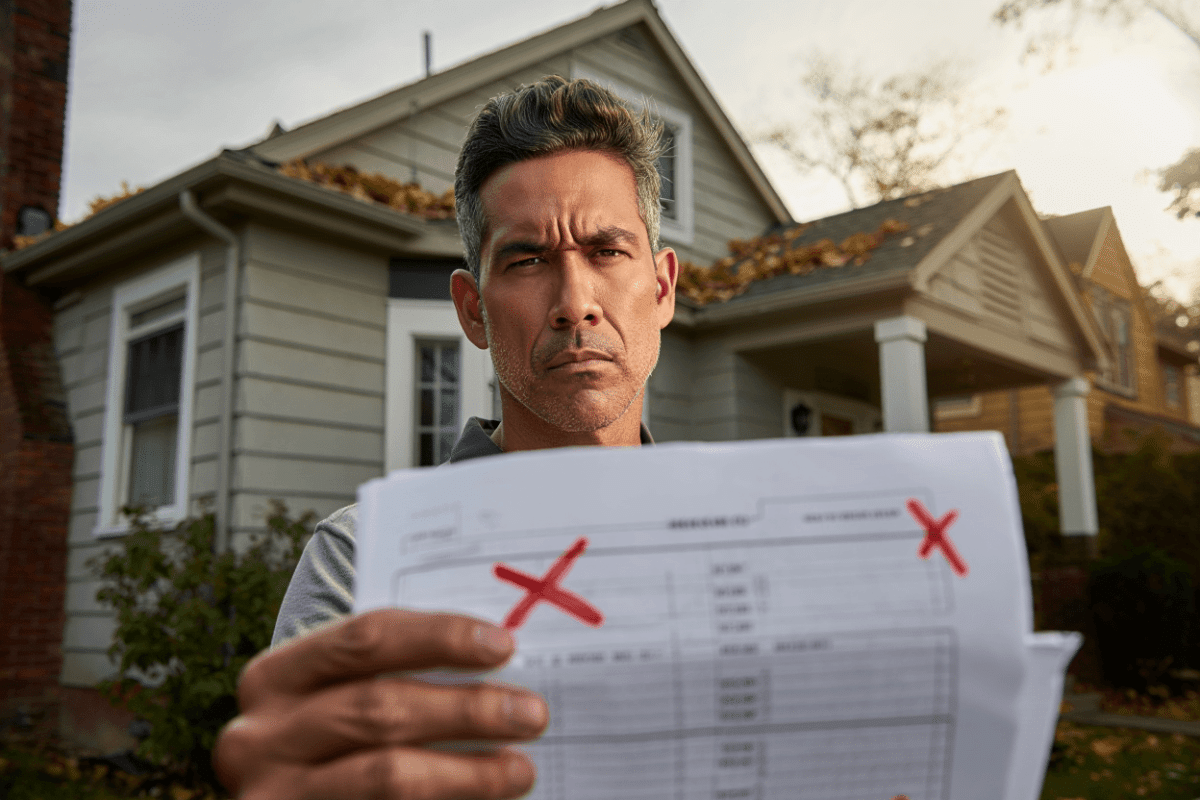

At the core of the decision are two interlinked worries: the possibility that foreign authorities could demand user data from U.S. AI providers, and the practice among many providers of using what users type in to further train their large language models. That combination, officials argue, can turn internal memos, constituent correspondence and draft legislation into information that is either accessible to external parties or reused by the very systems lawmakers rely on.

Examples singled out by the parliament include consumer-facing assistants such as ChatGPT, Microsoft’s Copilot and Anthropic’s Claude. Those tools typically route text to remote servers operated by the companies that develop them — a flow of data that raises legal and operational questions when used for official business.

Risks spelled out

- Data disclosure: Information sent to a cloud‑hosted model can be retained or processed in ways the institution cannot fully control.

- Legal compulsion: U.S. courts or authorities can compel American firms to hand over stored or accessible user information.

- Model reuse: Inputs supplied by one user may be incorporated into training data and influence model outputs seen by others.

- Operational exposure: Confidential deliberations and draft texts are at stake when everyday assistants are used in professional contexts.

Those concerns resonate across the EU, where data protection has long been a bedrock policy. Yet Brussels is also wrestling with how to regulate AI development without hamstringing innovation. Last year the European Commission proposed changes that would, in some instances, make it easier for companies to use European data to train AI systems — a suggestion that drew criticism from privacy advocates who say it would tip the balance toward big tech.

That policy debate takes on sharper significance against recent U.S. enforcement activity. According to reporting in recent weeks, the Department of Homeland Security issued a series of subpoenas to major technology platforms seeking information on users who publicly criticized the Trump administration. In several instances, firms including Google, Meta and Reddit responded to requests even where no judge had ordered disclosure.

Why this matters for lawmakers and the public

For elected officials, the consequences are practical and immediate: sensitive exchanges with constituents, internal legal advice and early drafts of legislation could be exposed or become part of vendors’ datasets. For citizens, the issue raises questions about the safety of sharing personal concerns or documents with their representatives when those communications might be processed by third‑party AI.

The parliament’s temporary ban on embedded AI features is a defensive step while technical, legal and contractual details are sorted. It also signals a broader reassessment in Europe of dependence on foreign cloud services for government business — a conversation that will influence procurement rules, vendor contracts and possibly the push for on‑premises or EU‑based AI alternatives.

From an institutional perspective, the most immediate implications will be:

- Tighter IT policies restricting how and where AI tools may be used on official devices;

- Demand for clearer contractual guarantees from vendors about data handling and non‑reuse for model training;

- Increased interest in localized, private AI deployments that do not transmit sensitive inputs beyond secure, internal systems.

As scrutiny intensifies, the debate in Brussels will test whether Europe can simultaneously protect privacy, preserve national security interests and foster responsible AI innovation — all while relying on services that remain, in many cases, subject to foreign legal regimes.